Organisations worldwide are converging on 2025 as the pivotal moment when AI must convert hype into measurable returns. A key success factor lies in how companies orchestrate change management, ensuring AI initiatives are purposefully aligned with strategic goals and earn genuine buy-in from everyone involved. This alignment is what bridges the AI Delta – the gap between the value AI can deliver when it succeeds and the value lost through poor execution.

Understanding the AI Delta

The AI Delta stems from a fundamental misalignment between AI adoption and business objectives. Companies often invest in AI but fail to integrate it effectively, leading to underutilised capabilities, unfulfilled expectations and missed ROI.

Without strong change management, AI risks becoming a disjointed initiative rather than a catalyst for transformation. Bridging this gap requires a structured approach that prioritises both technical implementation and the human factors essential for adoption.

Addressing resistance to AI

Change management is especially vital in addressing some of the biggest challenges associated with AI adoption. Resistance often stems from fears of job displacement or distrust in data privacy measures. Employees need more than just reassurance; they require practical upskilling – comprehensive training on AI fundamentals, data analysis and emerging tools – to feel confident and motivated to embrace new technology.

Resistance to AI adoption isn’t just about job security fears. Executives may hesitate due to unclear ROI, while employees might struggle with unfamiliar tools. Organisational silos can also slow adoption, as different departments fail to align on AI-driven processes. Addressing these concerns requires proactive change management that tailors messaging and training to different stakeholder groups.

Additionally, placing ethics at the centre of AI programmes is non-negotiable. By enacting clear governance frameworks and tackling data biases head-on, leaders can foster a culture of transparency and mutual trust with stakeholders.

Ethical AI and governance mechanisms

Ethical AI implementation hinges on three core pillars:

- Transparency: Clear communication on AI’s decision-making process to build trust

- Accountability: Defined roles for monitoring AI outputs and ensuring responsible usage

- Fairness: Proactive steps to detect and mitigate biases in AI models, preventing unintended discrimination
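As one illustration of what "detecting bias" can mean in practice, the sketch below compares how often a model returns a positive outcome for different groups. It is a minimal example under stated assumptions, not a complete fairness audit: the group labels, the sample records and the function names are hypothetical, and the selection-rate gap is only one of several possible fairness measures.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive outcomes per group.

    `records` is an iterable of (group, approved) pairs; both the
    structure and the labels are illustrative assumptions.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def selection_rate_gap(records):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical model decisions for two groups, A and B.
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(sample)
gap = selection_rate_gap(sample)
print(rates, gap)
```

A large gap does not prove discrimination on its own, but it flags where the accountable owners should look more closely at the data and the model.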

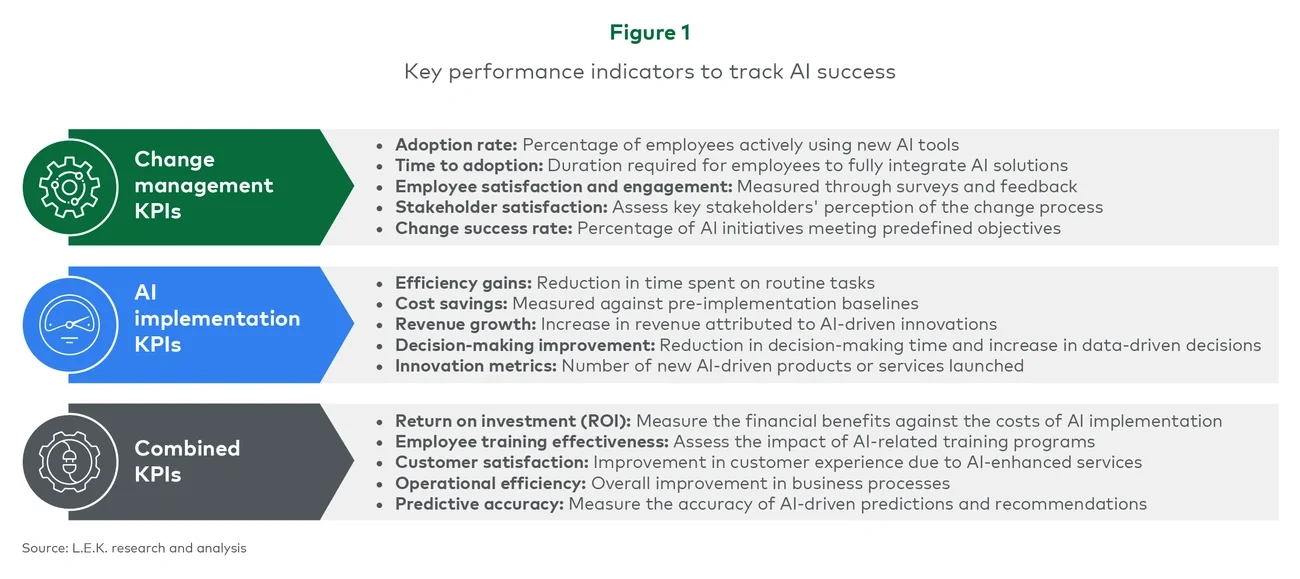

Critically, organisations must measure the difference AI makes (see Figure 1). Key indicators of success include the speed and extent of employee uptake, the overall efficiency gains achieved and any increase in data-driven decision-making.

Cost savings against pre-implementation benchmarks and tangible revenue gains from AI-driven products also show how effectively teams are capitalising on automation and insights.
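The indicators above reduce to simple ratios against a pre-implementation baseline. The sketch below shows one way they might be computed. The field names and figures are hypothetical, and each organisation will need to decide for itself what counts as an "active user" or a comparable cost period.

```python
from dataclasses import dataclass


@dataclass
class AdoptionSnapshot:
    """Hypothetical inputs for one reporting period (illustrative only)."""
    employees_with_access: int
    active_users: int               # employees who used the AI tool in the period
    baseline_cost: float            # pre-implementation cost for the same scope
    current_cost: float             # cost after the AI rollout
    baseline_hours_per_task: float  # average effort before AI
    current_hours_per_task: float   # average effort after AI


def adoption_rate(s: AdoptionSnapshot) -> float:
    """Share of employees with access who actually use the tool."""
    return s.active_users / s.employees_with_access


def cost_savings_ratio(s: AdoptionSnapshot) -> float:
    """Cost reduction relative to the pre-implementation benchmark."""
    return (s.baseline_cost - s.current_cost) / s.baseline_cost


def efficiency_gain_ratio(s: AdoptionSnapshot) -> float:
    """Reduction in hours per task relative to the baseline."""
    return (s.baseline_hours_per_task - s.current_hours_per_task) / s.baseline_hours_per_task


# Made-up figures purely to demonstrate the arithmetic.
snapshot = AdoptionSnapshot(
    employees_with_access=200,
    active_users=150,
    baseline_cost=100_000.0,
    current_cost=80_000.0,
    baseline_hours_per_task=4.0,
    current_hours_per_task=3.0,
)

print(f"Adoption rate:   {adoption_rate(snapshot):.0%}")
print(f"Cost savings:    {cost_savings_ratio(snapshot):.0%}")
print(f"Efficiency gain: {efficiency_gain_ratio(snapshot):.0%}")
```

Tracking the same ratios period over period, rather than as a one-off, is what shows the speed of uptake as well as its extent.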